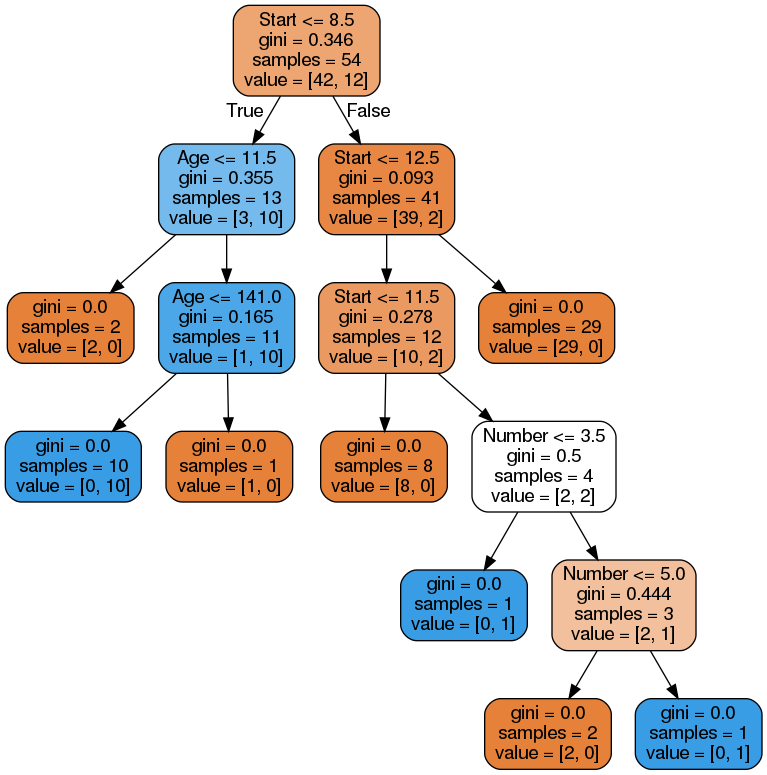

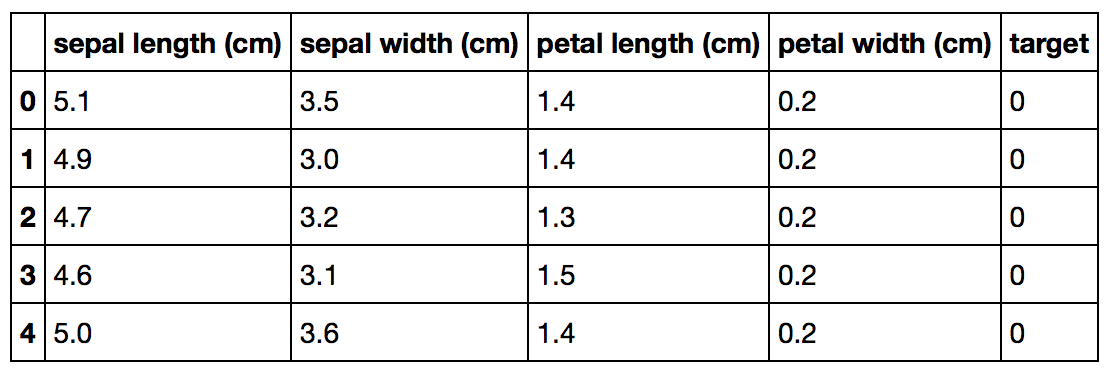

Each decision node compares a single feature's value in x, x i, with a specific split point value learned during training. For regression, similarity in a leaf means a low variance among target values and, for classification, it means that most or all targets are of a single class.Īny path from the root of the decision tree to a specific leaf predictor passes through a series of (internal) decision nodes. A decision tree carves up the feature space into groups of observations that share similar target values and each leaf represents one of these groups. For classifier trees, the prediction is a target category (represented as an integer in scikit), such as cancer or not-cancer.

For regression trees, the prediction is a value, such as price. (Notation: vectors are in bold and scalars are in italics.)Įach leaf in the decision tree is responsible for making a specific prediction.

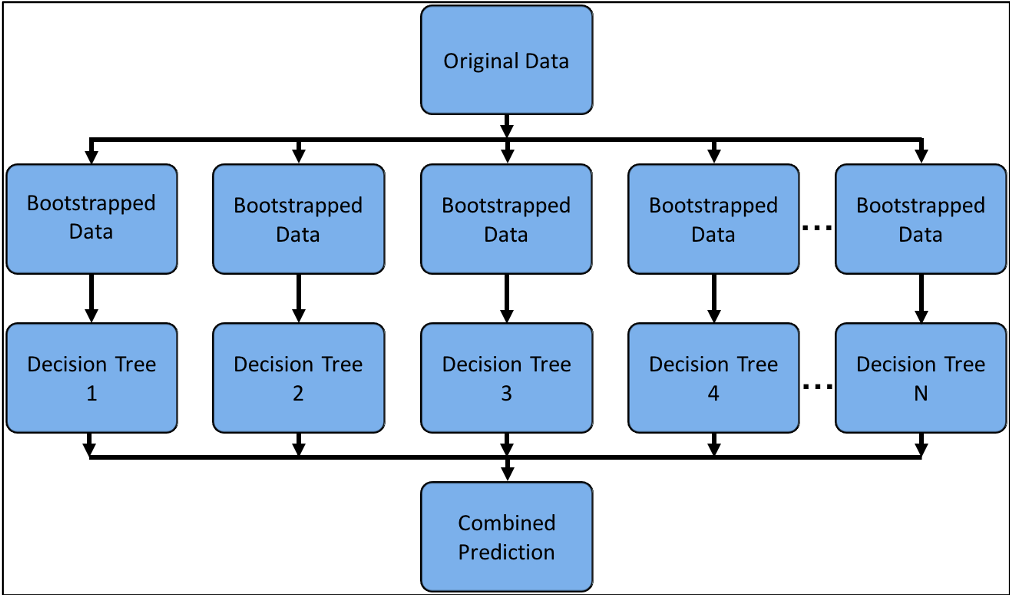

A decision tree learns the relationship between observations in a training set, represented as feature vectors x and target values y, by examining and condensing training data into a binary tree of interior nodes and leaf nodes. (If you're not familiar with decision trees, check out fast.ai's Introduction to Machine Learning for Coders MOOC.) 1.2 Decision tree reviewĪ decision tree is a machine learning model based upon binary trees (trees with at most a left and right child). We assume you're familiar with the basic mechanism of decision trees if you're interested in visualizing them, but let's start with a brief summary so that we're all using the same terminology. The visualization software is part of a nascent Python machine learning library called dtreeviz. This article demonstrates the results of this work, details the specific choices we made for visualization, and outlines the tools and techniques used in the implementation. Here's a sample visualization for a tiny decision tree (click to enlarge): So, we've created a general package for scikit-learn decision tree visualization and model interpretation, which we'll be using heavily in an upcoming machine learning book (written with Jeremy Howard). It is also uncommon for libraries to support visualizing a specific feature vector as it weaves down through a tree's decision nodes we could only find one image showing this. For example, we couldn't find a library that visualizes how decision nodes split up the feature space. Unfortunately, current visualization packages are rudimentary and not immediately helpful to the novice. Visualizing decision trees is a tremendous aid when learning how these models work and when interpreting models. Visualizations for purity and distributions for individual leaves.ĭecision trees are the fundamental building block of gradient boosting machines and Random Forests™, probably the two most popular machine learning models for structured data. 
A visualization of just the path from the root to a decision tree leaf.Īn explanation in English how a decision tree makes a prediction for a specific record. See dtreeviz_sklearn_visualisations.ipynb for examples. Beyond what is described in this article, the library now also includes the following features. Update July 2020 Tudor Lapusan has become a major contributor to dtreeviz and, thanks to his work, dtreeviz can now visualize XGBoost and Spark decision trees as well as sklearn.
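To make the review concrete, here is a minimal pure-Python sketch of how a prediction follows a path from the root, through decision nodes that each compare one feature value *x_i* with a split point, down to a leaf. It is not how scikit-learn or dtreeviz store trees. The class names `Leaf` and `DecisionNode`, the `predict` helper, the toy house-price data, and the convention that values below the split go left are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Union


@dataclass
class Leaf:
    # Regression: the predicted value. Classification: an integer class code.
    prediction: float


@dataclass
class DecisionNode:
    feature: int   # index i of the feature x_i tested at this node
    split: float   # split point (hypothetical here; normally learned in training)
    left: Union["DecisionNode", Leaf]   # assumed: taken when x[feature] < split
    right: Union["DecisionNode", Leaf]  # assumed: taken when x[feature] >= split


def predict(node, x):
    """Walk from the root to a leaf, recording each decision made on the way."""
    path = []
    while isinstance(node, DecisionNode):
        go_left = x[node.feature] < node.split
        path.append((node.feature, node.split, "left" if go_left else "right"))
        node = node.left if go_left else node.right
    return node.prediction, path


# Toy regression tree: feature 0 = square feet, feature 1 = bedrooms.
# Each leaf predicts the mean of the (made-up) target prices that reached it.
tree = DecisionNode(
    feature=0, split=1500.0,
    left=Leaf(mean([100.0, 120.0])),
    right=DecisionNode(
        feature=1, split=3.0,
        left=Leaf(mean([200.0, 210.0])),
        right=Leaf(mean([300.0, 320.0])),
    ),
)

value, path = predict(tree, [1800.0, 4.0])
print(value)  # 310.0
print(path)   # [(0, 1500.0, 'right'), (1, 3.0, 'right')]
```

The recorded `path` is the kind of information a visualization of a single feature vector weaving down through the tree would need to highlight.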
